Anatomy of an AI Agent

By: César Medina

Contact: cesar.medina@innovox.com.br

Article 3 of the Agentic AI Series: Systems that Perceive, Decide, and Act

Most production issues with AI agents get blamed on model limits. In practice, the problem is usually architectural: the loop is poorly designed, loosely constrained, or unreliably executed.

If you want agents that actually work in production, you need to understand how they’re structured before writing any code.

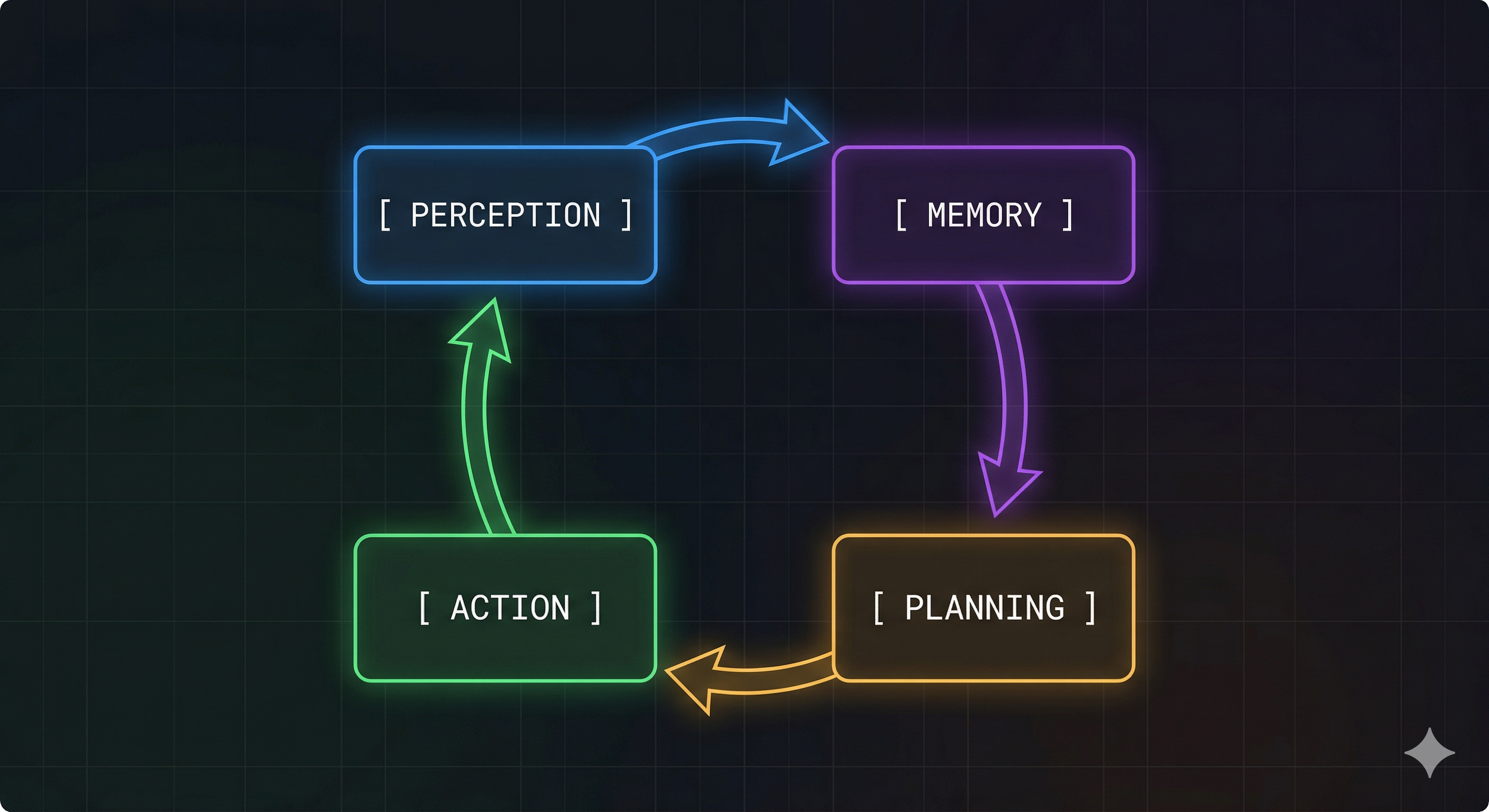

The 4 Fundamental Pillars

Every AI agent, no matter the framework or model, is built on four core components. Once you understand them, it becomes much easier to make solid design decisions and debug issues when things break.

1. Perception

Perception is how the agent connects to the outside world. It’s often described as input, but that misses the point. It’s really an interface with clear expectations.

Strong systems never pass raw data straight into reasoning. They rely on structured, validated, and filtered inputs. That means you need to think carefully about:

- How input is structured into something consistent

- How ambiguity and format issues are handled early

- What information is worth keeping and what should be ignored

Perception can come from text, APIs, events, files, or databases. What matters most is the format, the guarantees behind it, and how reliable it is.

If perception is messy, everything that follows will be too. The model can’t fix input it never understood correctly.
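As a minimal sketch of that idea, the snippet below turns a raw payload into a validated, normalized structure before anything reaches the reasoning step. The names `Observation`, `PerceptionError`, `perceive`, the allowed sources, and the size limit are all assumptions made for illustration; they do not come from any specific framework.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class Observation:
    """Structured input that the reasoning step is allowed to see."""
    source: str
    text: str


class PerceptionError(ValueError):
    """Raised when raw input cannot be turned into a valid Observation."""


ALLOWED_SOURCES = {"chat", "api", "event"}  # hypothetical source names
MAX_TEXT_CHARS = 2000                       # hypothetical size limit


def perceive(raw: dict[str, Any]) -> Observation:
    """Validate, normalize, and filter a raw payload before reasoning."""
    source = raw.get("source")
    if not isinstance(source, str) or source not in ALLOWED_SOURCES:
        raise PerceptionError(f"unknown source: {source!r}")

    text = raw.get("text")
    if not isinstance(text, str) or not text.strip():
        raise PerceptionError("missing or empty text")

    # Normalize whitespace so downstream steps see one consistent format.
    text = " ".join(text.split())
    # Keep only the declared fields; anything else in raw is ignored.
    return Observation(source=source, text=text[:MAX_TEXT_CHARS])
```

Rejecting bad input here, with an explicit error, keeps format problems out of the prompt instead of leaving the model to guess what was meant.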

2. Memory

Memory is often overlooked, and it’s where many systems fall apart.

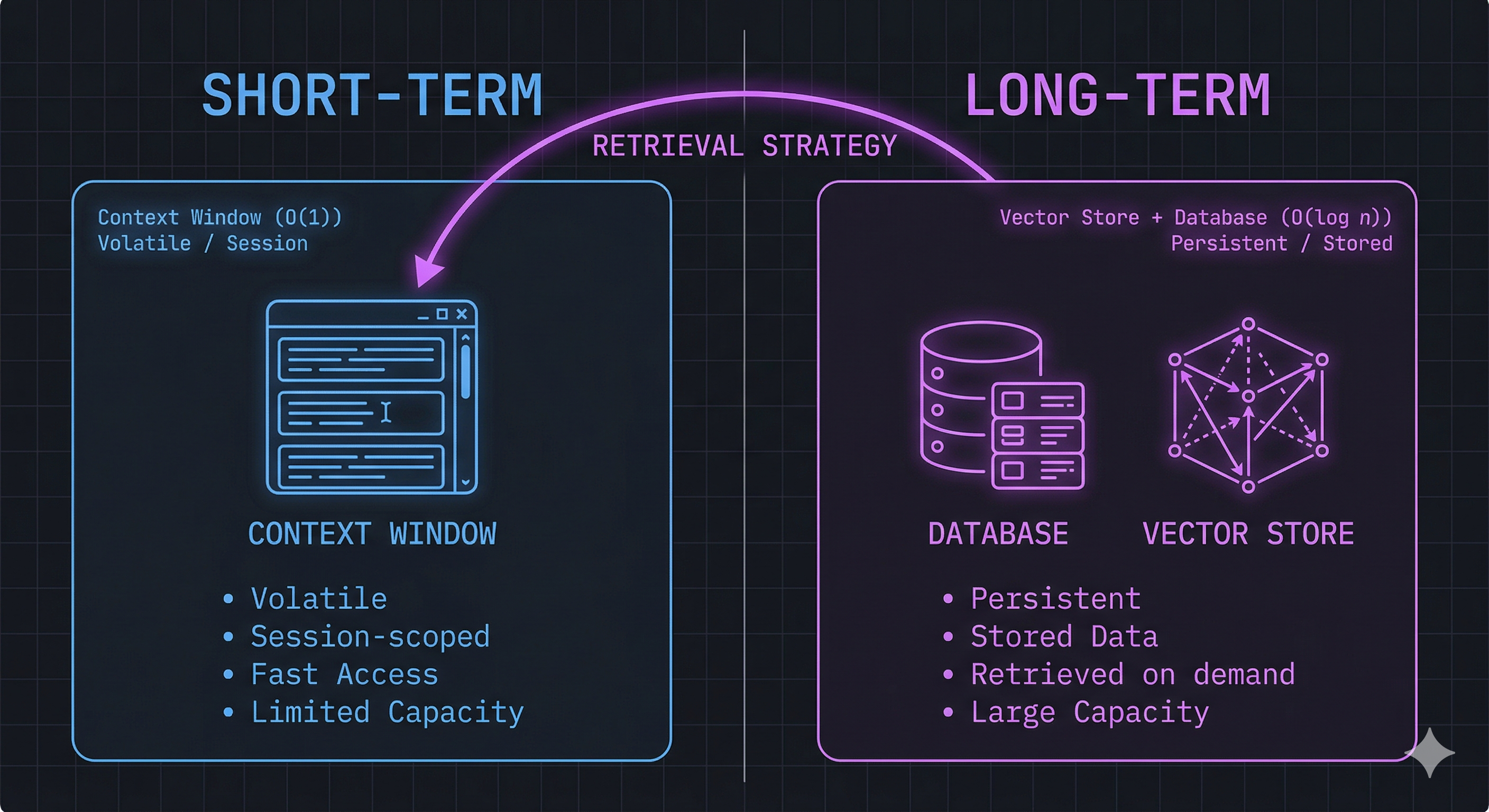

There are two main types:

Short-term memory lives inside the context window. It’s fast and easy to use, but limited and temporary.

Long-term memory is stored outside the model, in databases or vector stores, and gets pulled in when needed.

A common mistake is treating the context window as real memory. It isn’t. It’s just a working area.

The real challenge is retrieval. Saving everything is easy. Getting the right information at the right time is not. Poor retrieval leads to cluttered context, higher costs, and worse reasoning.

You need to define:

- How information is retrieved

- What gets retrieved versus what stays stored

- How to balance speed with relevance
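The sketch below illustrates the split between the two memory types and a deliberately naive retrieval step. It assumes a bounded buffer as a stand-in for the context window and a plain list as a stand-in for a database or vector store. The `AgentMemory` class and its word-overlap ranking are hypothetical; a production system would more likely use embeddings or a search index.

```python
from collections import deque


class AgentMemory:
    def __init__(self, short_term_size: int = 4) -> None:
        # Short-term: bounded buffer standing in for the context window.
        self.short_term: deque[str] = deque(maxlen=short_term_size)
        # Long-term: plain list standing in for a database or vector store.
        self.long_term: list[str] = []

    def remember(self, note: str) -> None:
        """Write a note to both stores; old short-term notes fall off."""
        self.short_term.append(note)
        self.long_term.append(note)

    def retrieve(self, query: str, k: int = 2) -> list[str]:
        """Return up to k long-term notes ranked by word overlap with query."""
        query_words = set(query.lower().split())
        scored = []
        for note in self.long_term:
            overlap = len(query_words & set(note.lower().split()))
            if overlap > 0:
                scored.append((overlap, note))
        # Stable sort: ties keep insertion order.
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [note for _, note in scored[:k]]
```

The parameter `k` is where the speed versus relevance trade-off shows up: a small `k` keeps the context lean, while a larger one risks pulling in clutter.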

3. Planning

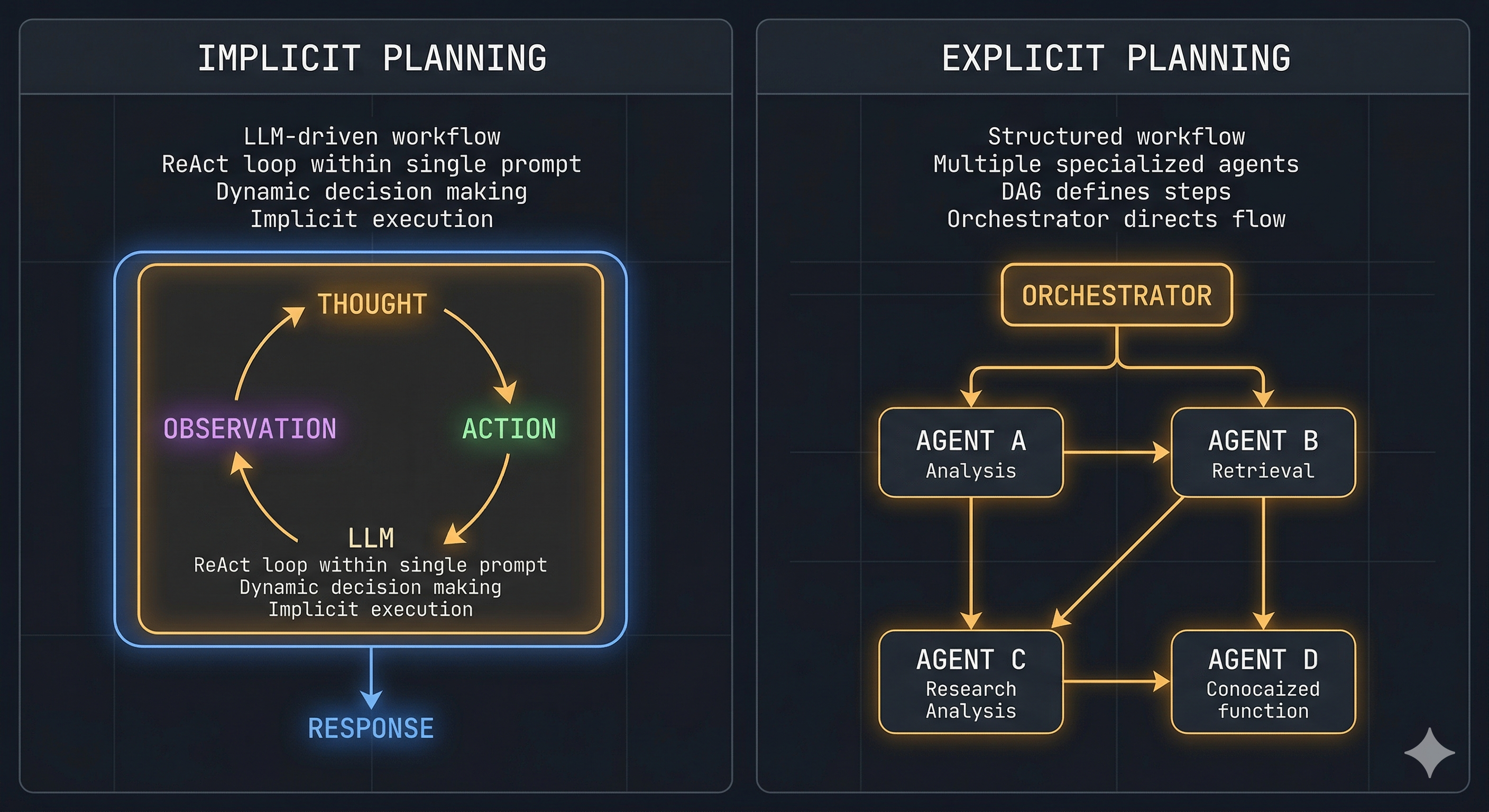

Planning is where the system decides what to do next. It sits between understanding and acting.

There are two ways this usually happens.

Implicit planning happens inside the model. The LLM decides the next step based on the prompt. This is flexible and simple to set up, but it’s hard to control and even harder to debug.

Explicit planning lives in the system design. The flow is clearly defined using structures like task graphs, state machines, or multiple agents working together.

Systems that rely only on implicit planning often work in demos but struggle in real environments. Explicit planning adds structure, makes behavior easier to observe, and gives you more control.

That shift, from implicit to explicit, is often what separates something that works once from something that works consistently.
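One way to picture explicit planning is a small state machine whose transitions live in code rather than in a prompt. The states, outcomes, and function names below are invented for illustration. In a real agent, each outcome would come from running a step (possibly an LLM call) instead of from a prepared list.

```python
from enum import Enum


class State(Enum):
    FETCH = "fetch"
    SUMMARIZE = "summarize"
    REVIEW = "review"
    DONE = "done"
    FAILED = "failed"


# Allowed transitions, keyed by (current state, outcome of the step).
TRANSITIONS = {
    (State.FETCH, "ok"): State.SUMMARIZE,
    (State.FETCH, "error"): State.FAILED,
    (State.SUMMARIZE, "ok"): State.REVIEW,
    (State.SUMMARIZE, "error"): State.FAILED,
    (State.REVIEW, "ok"): State.DONE,
    (State.REVIEW, "rejected"): State.SUMMARIZE,
}


def next_state(current: State, outcome: str) -> State:
    """Look up the next state; unknown transitions fail loudly."""
    try:
        return TRANSITIONS[(current, outcome)]
    except KeyError:
        raise ValueError(f"no transition from {current.name} on {outcome!r}") from None


def run_plan(outcomes: list[str]) -> list[State]:
    """Replay a list of step outcomes and return the states visited."""
    state = State.FETCH
    visited = [state]
    for outcome in outcomes:
        if state in (State.DONE, State.FAILED):
            break  # terminal states end the plan
        state = next_state(state, outcome)
        visited.append(state)
    return visited
```

Because every transition is listed in one table, the visited path can be logged and inspected, which is much harder when the next step exists only inside a model response.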

4. Action (Tools)

Tools are what allow the agent to do real work. These can be APIs, database queries, code execution, browsing, or file operations.

More tools increase what the agent can do, but they also introduce more risk. Every tool call can fail.

Unlike text generation, tool usage has consequences. A bad call can break data, trigger unwanted actions, or block progress.

Because of that, tool usage needs guardrails:

- Validate inputs before execution

- Handle failures and retries properly

- Define fallback behavior

- Add human checks when the stakes are high

This is where the agent interacts with the real world, so it needs the most care.
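The guardrails listed above can be sketched as a single wrapper around a tool call. Everything here is an assumption made for illustration: the `guarded_call` name, its parameters, and the linear backoff. For brevity it retries on any exception, whereas a real system would usually retry only on errors known to be transient.

```python
import time
from typing import Any, Callable


def guarded_call(
    tool: Callable[..., Any],
    args: dict[str, Any],
    validate: Callable[[dict[str, Any]], bool],
    fallback: Any = None,
    retries: int = 2,
    needs_approval: bool = False,
    approve: Callable[[dict[str, Any]], bool] = lambda args: False,
    delay_s: float = 0.0,
) -> Any:
    """Run a tool with validation, optional approval, retries, and fallback."""
    # Guardrail 1: validate inputs before execution.
    if not validate(args):
        raise ValueError(f"invalid tool arguments: {args!r}")
    # Guardrail 2: high-stakes calls need an explicit human yes.
    if needs_approval and not approve(args):
        raise PermissionError("human approval was not granted")

    # Guardrail 3: retry failures a bounded number of times.
    for attempt in range(retries + 1):
        try:
            return tool(**args)
        except Exception:
            if attempt < retries:
                time.sleep(delay_s * (attempt + 1))  # simple linear backoff
    # Guardrail 4: defined fallback once retries are exhausted.
    return fallback
```

The default `approve` callback denies everything, so a call marked `needs_approval=True` cannot run until someone wires in a real approval step.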

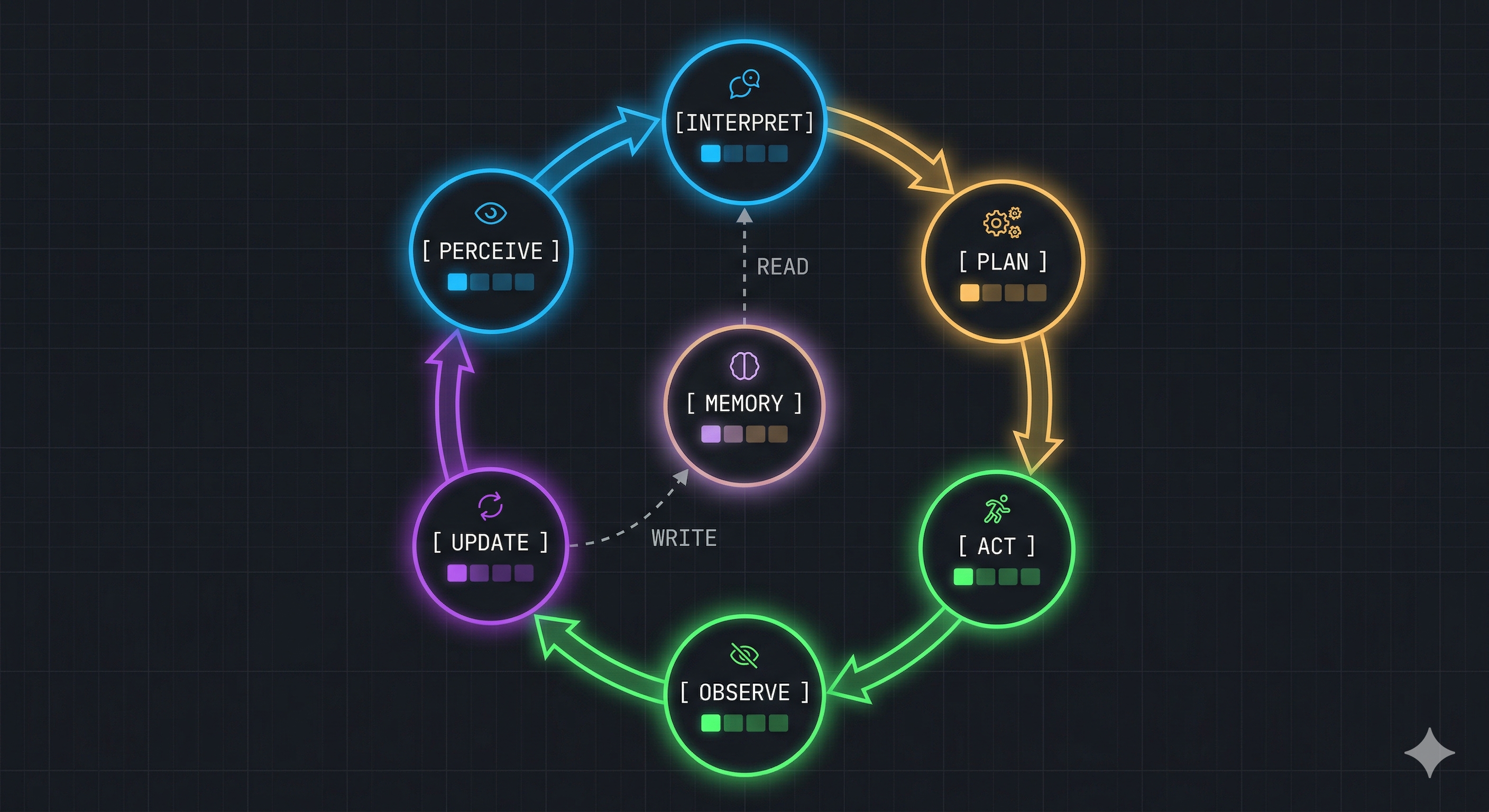

The Complete Flow: A Closed-Loop System

Agents don’t operate in a straight line. They run in a loop where each action affects what happens next.

Perceive → Interpret → Plan → Act → Observe → Update → Repeat

The update step matters more than it looks. That’s where memory is written before the next cycle. Without it, the agent behaves as if it forgets everything between steps.

This loop continues until the task is done, a stopping condition is reached, or the system fails.
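To make the loop and its three exits concrete, here is a toy sketch in which the "environment" is a single number the agent tries to move toward a target. The `run_loop` function, the `act` callback, and the step limit are hypothetical stand-ins, not part of any framework. In a real agent, the interpret and plan steps would involve a model call.

```python
from typing import Callable


def run_loop(
    target: int,
    act: Callable[[int, int], int],
    start: int = 0,
    max_steps: int = 10,
) -> tuple[str, list[str]]:
    """Toy closed loop: move a value toward target in bounded steps."""
    value = start
    memory: list[str] = []  # written in the update step

    for step in range(1, max_steps + 1):
        observed = value                      # perceive
        gap = target - observed               # interpret
        if gap == 0:
            return "done", memory             # exit 1: task is done
        move = max(-3, min(3, gap))           # plan: bounded step
        try:
            value = act(observed, move)       # act, then observe the result
        except Exception as exc:
            memory.append(f"step {step}: failed ({exc})")
            return "failed", memory           # exit 3: the system fails
        memory.append(f"step {step}: {observed} -> {value}")  # update

    # exit 2: stopping condition (step budget) reached
    status = "done" if value == target else "stopped"
    return status, memory
```

With `act=lambda current, move: current + move` and `target=7`, the loop finishes in three steps and the memory log records each transition. Removing the `memory.append` lines gives an agent that has no record of what it already tried.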

Control doesn’t come only from the model. It comes from how the model, memory, tools, and environment interact over time.

People often call this the ReAct loop, but ReAct is one instance of a broader class of agentic control loops.

Failure Modes

Each part of the system tends to fail in its own way:

- Perception issues lead to confident but incorrect reasoning

- Memory problems cause repetition or loss of context

- Weak planning results in loops or stuck tasks

- Poor tool handling leads to real-world errors

Most failures trace back to one of these areas, even if they get blamed on the model.
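Some of these failures can be caught mechanically. As one hedged example, the sketch below flags a run as possibly stuck when a single action dominates the most recent steps. The `is_stuck` helper and its thresholds are assumptions chosen for illustration and would need tuning for any real workload.

```python
from collections import Counter


def is_stuck(actions: list[str], window: int = 6, max_repeats: int = 3) -> bool:
    """Flag a run when one action appears max_repeats times in the last window steps."""
    recent = actions[-window:]
    if not recent:
        return False
    _, count = Counter(recent).most_common(1)[0]
    return count >= max_repeats
```

A check like this can feed the loop's stopping condition, so a planning failure ends the run early instead of consuming the whole step budget.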

The model is just one part of the system. What really matters is how everything works together.

Conclusion

Agents are not linear systems. They are cyclical.

If you design them as simple pipelines, they will break when the environment becomes unpredictable. Systems that can observe outcomes, adjust, and try again are the ones that hold up.

Each pass through the loop improves the agent’s understanding by replacing guesses with actual feedback.

That’s what makes agent-based systems different from traditional software. Behavior isn’t fully predefined. It emerges from interaction.

This is the third article in a series on agentic AI, systems that perceive, decide, and act. It’s technical enough for developers, but still accessible if you’re just getting started.

InnoVox engineering team

Engineers focused on building reliable AI systems