Types of AI Agents: Reactive, Planners, and Autonomous

By: César Medina

Contact: cesar.medina@innovox.com.br

Article 5 of the Agentic AI Series: Systems that Perceive, Decide, and Act

In the previous articles of this series, we looked at what an agent is, how it differs from a simple language model, and which components form its anatomy. Now it’s time to answer a practical question: When you say “AI agent,” which type are you talking about?

The answer matters. A reactive agent and an autonomous agent might use the same underlying language model, but they behave in completely different ways. Confusing the two leads to wrong expectations, inadequate architectures, and unnecessary frustration.

Agent Classification

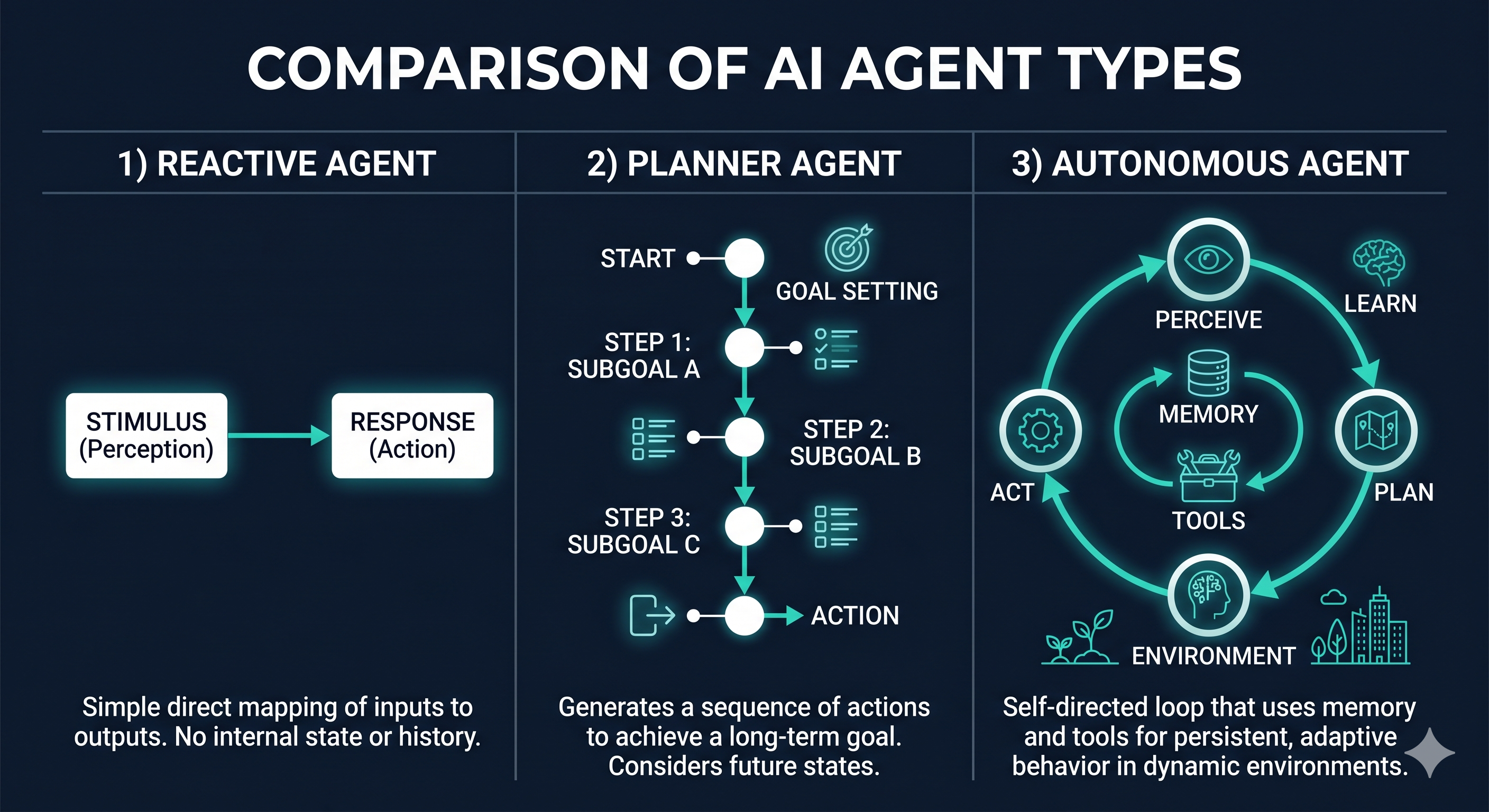

There is no official taxonomy for AI agents. Different researchers and frameworks use different terms for similar concepts. However, in practice, three categories cover the vast majority of systems you will encounter or build:

- Reactive Agents (rule-based or reflexive)

- LLM-driven Agents

- Planning Agents (multi-step planners)

These categories are not mutually exclusive and can be combined. A real agent often mixes characteristics of all three. However, understanding each separately clarifies your reasoning.

1. Reactive Agents

What they are

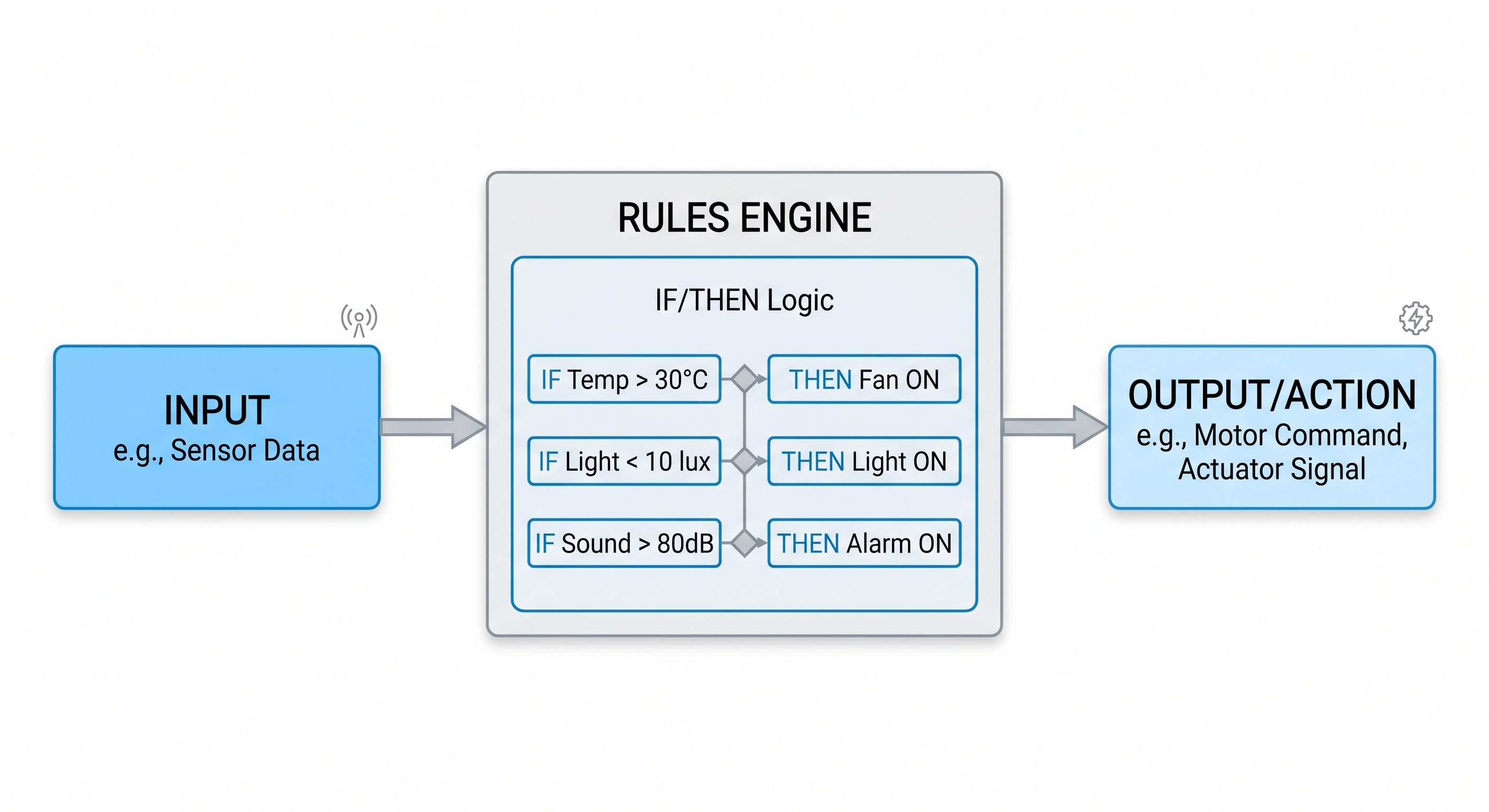

Reactive agents respond to environmental stimuli with predefined actions. They don’t plan. They don’t reason about future consequences. They receive an input, consult a set of rules, and execute the corresponding action.

The core logic is simple:

IF condition X → THEN action Y

How they work in practice

Think of a customer service chatbot that identifies keywords and redirects to departments. Or an automation script that monitors logs and triggers alerts when it detects an error pattern. Or even an email assistant that sorts messages by sender and applies labels.

None of these systems “think.” They react.
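
As a minimal sketch of the IF/THEN logic above, the keyword-routing chatbot could look like the following. The rule table, department names, and the `human_review` fallback are hypothetical and exist only for illustration.

```python
import re

# Hypothetical keyword-to-department rules; the first matching rule wins.
RULES = [
    (("refund", "invoice", "charge"), "billing"),
    (("password", "login", "error"), "technical_support"),
    (("cancel", "upgrade", "plan"), "accounts"),
]


def route(message: str) -> str:
    """Return the department for a message, or 'human_review' if no rule fires."""
    # Lowercase and split into words so punctuation does not block a match.
    words = set(re.findall(r"[a-z]+", message.lower()))
    for keywords, department in RULES:
        # IF any keyword appears in the message THEN route to that department.
        if words & set(keywords):
            return department
    # No rule matched: the agent cannot generalize, so it hands off.
    return "human_review"


print(route("I was charged twice, please refund me"))
print(route("My package arrived damaged"))
```

The second message matches no rule, so it falls through to the fallback. There is no inference here, only lookup.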

Strengths

- Predictable: Behavior is deterministic. For input X, output Y is always the same.

- Fast: No reasoning overhead. Latency is low.

- Easy to audit: You can inspect every rule and understand the behavior without surprises.

- Reliable in controlled contexts: In environments where the set of possible situations is finite and well-mapped, rules work very well.

Limitations

The problem arises when the world becomes more complex than the anticipated rules. If the situation doesn’t fit any rule, the agent doesn’t know what to do. It doesn’t generalize. Every exception requires a new rule, and soon the system becomes a labyrinth of conditions.

Additionally, rules are static. They don’t learn from new situations and don’t adapt behavior based on historical context.

Reactive agents are great when the problem is well-defined. They are fragile when the problem has significant variation.

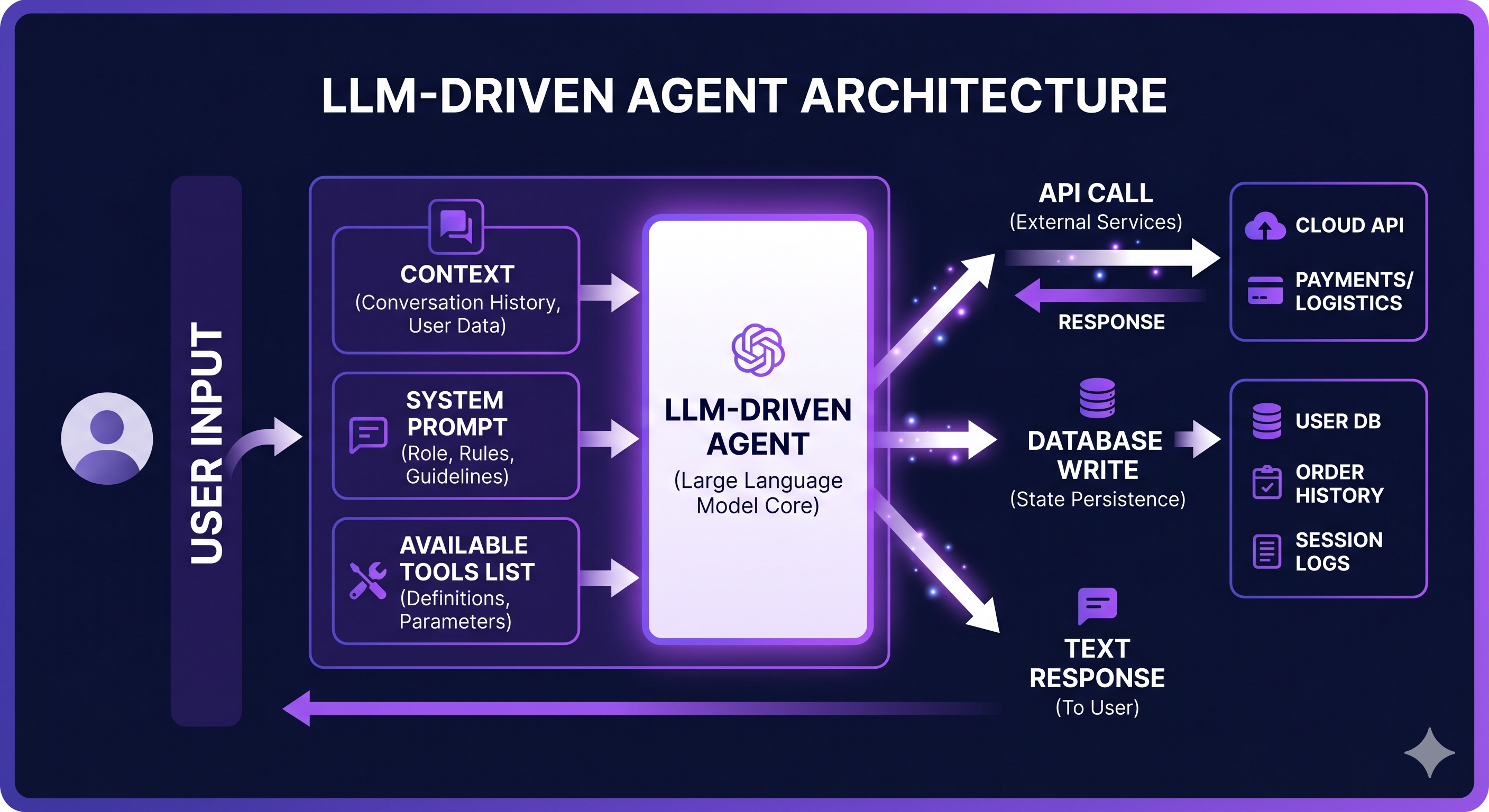

2. LLM-driven Agents

What they are

This is where the language model enters as the decision engine. Instead of fixed rules, the agent uses an LLM to interpret the situation and decide what to do. The intelligence shifts from the code to the model.

The basic structure changes to:

input → LLM (reasoning) → action

How they work in practice

The agent receives an input (text, data, context), sends it to the model with a prompt describing the available tools and the objective, and executes the action the model indicates. The result could be calling an API, writing a file, answering a question, or anything else the agent’s tools allow.
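
The flow just described can be sketched in a few lines. Everything here is an assumption made for illustration: `fake_llm` is a stand-in for a real model call, the JSON reply format is one possible convention, and the two tools are stubs.

```python
import json
from typing import Callable


# Hypothetical tools the agent is allowed to call.
def get_weather(city: str) -> str:
    return f"(stub) forecast for {city}"


def answer_directly(text: str) -> str:
    return text


TOOLS: dict[str, Callable[..., str]] = {
    "get_weather": get_weather,
    "answer_directly": answer_directly,
}


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call; returns a JSON tool decision."""
    # Look only at the user's part of the prompt, not the tool list.
    user_input = prompt.rsplit("User input:", 1)[-1].lower()
    if "weather" in user_input:
        return json.dumps({"tool": "get_weather", "args": {"city": "Lisbon"}})
    return json.dumps(
        {"tool": "answer_directly", "args": {"text": "I can only help with weather."}}
    )


def run_agent(user_input: str, llm: Callable[[str], str] = fake_llm) -> str:
    """One turn: build the prompt, ask the model, execute the chosen tool."""
    prompt = (
        "Objective: help the user.\n"
        f"Available tools: {', '.join(TOOLS)}\n"
        'Reply with JSON: {"tool": <name>, "args": {...}}\n'
        f"User input: {user_input}"
    )
    decision = json.loads(llm(prompt))
    tool = TOOLS.get(decision["tool"])
    if tool is None:
        # The model asked for something the agent does not expose.
        return f"Model requested unknown tool: {decision['tool']}"
    return tool(**decision["args"])


print(run_agent("What's the weather like?"))
```

The decision lives in the model's reply, not in the code. The code only checks that the requested tool exists and then runs it.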

The leap from the reactive model

The crucial difference is that the LLM generalizes. It was trained on an enormous amount of text and learned reasoning patterns. This means it can handle situations that weren’t explicitly programmed, interpret ambiguous language, and adapt the response to the context.

An LLM-driven agent can receive the request “I need a table comparing the three plans of our competitors” and know that this involves searching for data, structuring a table, and formatting the response, without any specific rule having been written for that case.

Limitations

Generalization comes at a cost: unpredictability. The same input can generate slightly different outputs. The model can “hallucinate,” make reasoning errors, or misinterpret intent.

Furthermore, simple LLM-driven agents are still essentially reactive in their structure: they receive an input and produce a response. The difference is that the decision engine is probabilistic rather than rule-based. For tasks requiring multiple coordinated steps over time, this is not enough.

3. Planning Agents (Multi-step Planners)

What they are

Planners are agents that build a plan before acting. They decompose a complex goal into subtasks, execute each step, observe the results, and adjust the plan as necessary.

The cycle that defines them is:

goal → plan → action → observation → plan revision → next action → ...

This loop continues until the goal is reached or the agent concludes it cannot proceed.

How they work in practice

Imagine asking an agent: “Research our product’s top three competitors, compare prices, and generate a PDF report.”

A reactive agent wouldn’t know what to do. A simple LLM agent would try to answer everything at once, likely making up data.

A planning agent would do something like this:

1. Identify that the task has three subtasks: research, comparison, and document generation.

2. Use a search tool to collect information on each competitor.

3. Structure the collected data into a comparative table.

4. Call a PDF generation tool with the structured content.

5. Verify if the PDF was generated correctly and report the result.

If in step 2 a competitor doesn’t have publicly available pricing info, the agent revises the plan: it tries another source or records this gap in the report instead of inventing a number.
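
To make the revision step concrete, here is a toy version of that loop. It is a sketch under stated assumptions: the competitor names, the two pricing sources, and the string "report" that stands in for a PDF are all invented, and a real planner would ask an LLM to produce and revise the plan.

```python
from collections import deque

# Hypothetical stub data; a real agent would call search and PDF services.
PRIMARY = {"Acme": "$10/mo", "Globex": None, "Initech": "$12/mo"}
SECONDARY = {"Globex": None}  # the fallback source also lacks Globex pricing


def search(source: dict, competitor: str):
    """Stub search tool: returns a price string or None."""
    return source.get(competitor)


def run_planner(competitors: list[str]) -> dict:
    """goal -> plan -> action -> observation -> plan revision -> next action."""
    plan = deque(("research", name, "primary") for name in competitors)
    plan.append(("build_table", None, None))
    plan.append(("generate_report", None, None))
    prices, table, log = {}, [], []

    while plan:
        step, name, source = plan.popleft()
        log.append(step if name is None else f"{step}:{name}:{source}")
        if step == "research":
            price = search(PRIMARY if source == "primary" else SECONDARY, name)
            if price is not None:
                prices[name] = price
            elif source == "primary":
                # Plan revision: try another source before moving on.
                plan.appendleft(("research", name, "secondary"))
            else:
                # Record the gap instead of inventing a number.
                prices[name] = "not publicly available"
        elif step == "build_table":
            table = [f"{n}: {p}" for n, p in prices.items()]
        elif step == "generate_report":
            return {"report": "\n".join(table), "log": log}
    return {"report": "", "log": log}


result = run_planner(["Acme", "Globex", "Initech"])
print(result["report"])
print(result["log"])
```

The log shows an extra `research:Globex:secondary` step that was not in the initial plan. That inserted step is the plan revision.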

Frameworks implementing this pattern

Several established patterns and frameworks are built around this planning model:

- ReAct (Reasoning + Acting): The model alternates between reasoning about the situation and executing actions, producing an explicit thought trace before each action.

- Plan-and-Execute: A planner generates a full plan before execution starts, and a separate executor carries out each step, replanning when a step's result calls for it.

- LangGraph, AutoGen, CrewAI: Frameworks for building such agents. LangGraph models the workflow as a state graph where nodes represent steps and edges represent conditional transitions; AutoGen and CrewAI coordinate multiple agents through conversations and role-based task assignment.

We will explore each of these patterns in depth in future articles.
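
Until then, the state-graph idea mentioned above can be illustrated in plain Python. This sketch deliberately does not use the API of LangGraph or any other framework; the node names, the `State` dictionary, and the retry condition are assumptions chosen to keep the example small.

```python
from typing import Callable, Optional

State = dict


# Each node is a function that reads and updates the shared state.
def collect(state: State) -> State:
    state["attempts"] += 1
    # Stub: data only becomes available on the second attempt.
    state["data"] = "prices" if state["attempts"] >= 2 else None
    return state


def summarize(state: State) -> State:
    state["summary"] = f"summary of {state['data']}"
    return state


NODES: dict[str, Callable[[State], State]] = {
    "collect": collect,
    "summarize": summarize,
}

# Each edge is a function that picks the next node; None means stop.
EDGES: dict[str, Callable[[State], Optional[str]]] = {
    "collect": lambda s: "summarize" if s["data"] else "collect",
    "summarize": lambda s: None,
}


def run_graph(start: str, state: State, max_steps: int = 10) -> State:
    """Walk the graph from `start` until an edge returns None or steps run out."""
    node: Optional[str] = start
    state["visited"] = []
    for _ in range(max_steps):
        if node is None:
            break
        state["visited"].append(node)
        state = NODES[node](state)
        node = EDGES[node](state)
    return state


final = run_graph("collect", {"attempts": 0})
print(final["visited"])
```

The conditional edge out of `collect` is what sends the run back to the same node when no data came back. The `max_steps` cap keeps a faulty edge from looping forever.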

Why planners are different

The fundamental distinction is the time horizon. Reactive agents operate in the immediate present: I receive X, I do Y. Planners operate over sequences: to reach Z, I need to pass through A, B, and C, in that order, adjusting according to what happens at each step.

This ability to reason about a sequence of future steps is what allows planning agents to perform tasks that take minutes or hours, not just seconds.

Direct Comparison

| Feature | Reactive | LLM-driven | Planner |
|---|---|---|---|
| Decision Engine | Fixed Rules | LLM (one step) | LLM (multiple steps) |
| Generalization | None | High | High |
| Predictability | Total | Partial | Partial |
| Complex Tasks | No | Limited | Yes |
| Memory Usage | Rare | Optional | Essential |
| Latency | Very low | Medium | High |
| Token Cost | Zero | Low | High |
| Debug Ease | High | Medium | Low |

No category is absolutely better than the others. The choice depends on the problem.

When to use each type

The decision isn’t philosophical; it’s practical. Some questions help guide the choice:

Use a reactive agent when:

- The set of possible situations is finite and well-mapped.

- Response speed is critical.

- Auditability and predictability are mandatory requirements (e.g., regulated systems).

- Token cost matters a lot.

Use an LLM-driven agent when:

- Inputs vary widely and fixed rules don’t cover all cases.

- You need natural language as an interface.

- The task fits into a single turn of reasoning.

Use a planning agent when:

- The task involves multiple sequential steps.

- The agent needs to use several tools in coordination.

- The final goal cannot be reached in a single action.

- You need the agent to handle intermediate failures and adjust the plan.
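
The checklists above can be condensed into a rough first-pass heuristic. The `Task` fields and the order of the checks are assumptions made for this sketch; real decisions also weigh cost, latency, and audit requirements.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """Hypothetical description of a task, reduced to three yes/no questions."""
    finite_known_inputs: bool      # situations are finite and well-mapped
    needs_multiple_steps: bool     # goal cannot be reached in a single action
    needs_natural_language: bool = False  # free-form language is the interface


def suggest_agent_type(task: Task) -> str:
    """Return a starting point, not a verdict."""
    if task.needs_multiple_steps:
        return "planner"
    if task.finite_known_inputs and not task.needs_natural_language:
        return "reactive"
    return "llm-driven"


print(suggest_agent_type(Task(finite_known_inputs=True, needs_multiple_steps=False)))
print(suggest_agent_type(Task(finite_known_inputs=False, needs_multiple_steps=False)))
print(suggest_agent_type(Task(finite_known_inputs=False, needs_multiple_steps=True)))
```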

The Reality: Hybrid Systems

In practice, production systems rarely use a single pure type. A real product might have:

- A reactive agent for quick request triaging (classification, routing, format validation).

- An LLM agent to interpret user intent and generate conversational responses.

- A planning agent to execute complex workflows in the background.

This composition allows each part of the system to use the most suitable agent type for its role. Speed where speed matters, reasoning where reasoning is needed, and planning where the task is long and uncertain.
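
A minimal sketch of such a composition, assuming a hypothetical `/report` command that marks long-running workflows. Both downstream agents are stubs that stand in for a real LLM call and a real planner.

```python
def reactive_triage(request: str) -> str:
    """Fast rule-based layer: validate the format and pick a route."""
    text = request.strip().lower()
    if not text:
        return "reject"
    if text.startswith("/report"):
        return "workflow"
    return "conversation"


def llm_agent(request: str) -> str:
    # Stub for a single-turn LLM-driven agent.
    return f"(stub LLM reply to: {request})"


def planning_agent(request: str) -> str:
    # Stub for a planner that would run in the background.
    return f"(stub) queued background workflow for: {request}"


def handle(request: str) -> str:
    """Send each request to the cheapest agent type that can handle it."""
    route = reactive_triage(request)
    if route == "reject":
        return "Empty request."
    if route == "workflow":
        return planning_agent(request)
    return llm_agent(request)


print(handle("/report competitors"))
print(handle("hello"))
```

Only requests that pass the rule-based layer reach a model, so empty or malformed input costs no tokens.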

In the next article in this series, we will move from theory to practice and build our first functional agent from scratch—with code, a real perception-action-observation loop, and integrated tools.

Summary

- Reactive agents operate by fixed rules. Fast, predictable, fragile outside mapped scope.

- LLM-driven agents use a language model as a decision engine. They generalize well but operate in a single turn.

- Planners decompose goals into steps, execute in a loop, and adjust the plan based on results. They are the most capable for complex tasks and the hardest to debug.

Choosing between them isn’t about which is more advanced; it’s about which solves your specific problem.

This is the fifth article in a series on Agentic AI: systems that perceive, decide, and act. It is technical enough for developers but still accessible to beginners.

Engineering team at InnoVox Engineers focused on building reliable AI systems